Building Scalable Data Pipelines for AI Enablement: Skip the Opportunity Cost of Speed in Pursuit of the Value of Trust

- James Gowen Jr., Ph.D.

- Apr 21

- 7 min read

IA FORUM MEMBER INSIGHTS: ARTICLE

By James Gowen Jr., Ph.D., Global Head of Data Engineering Controls - Data Quality Platforms, CITI

World Economic Forum author Campbell (2026) confirms the rapid pace at which Artificial Intelligence (AI) is advancing in the marketplace. However, as the industry strives to integrate and enable Artificial General Intelligence (AGI) assets in its work environments, practitioners must remain vigilant and challenge the “AI Hype” that often follows innovation and early adoption (Sarkar, 2026; Siegel, 2023). Clever sayings and the accelerated ‘fail fast, fail often’ philosophy of “shipping fast” in the name of innovation and speed are dangerous when extended to building the scalable data pipelines that must carry these capabilities (Campbell, 2026; Ewing, 2026; Pontefract, 2018).

What Are Data Pipelines?

Data pipelines are the assembly lines that capture, process, transform, transport, and store information from its source to its destination across the value chain. They are a critical component in today’s industry as we advance into the next stage of data readiness, racing toward artificial general intelligence (AGI) and on to artificial super intelligence (ASI) (Campbell, 2026; Foidl et al., 2024; Subramanian, 2025). Building AI capabilities on weak foundations derails transformation and innovation, impedes strategic growth and scalability, and erodes trust (Cheah, 2026; Foidl et al., 2024; Hitchcock, 2025). Research indicates that these same pipelines have often been deemed unreliable and untrustworthy in their ability to deliver quality data (Campbell, 2026; Foidl et al., 2024). Sarkar’s study (2026) showed that 74% of companies failed to deliver on their AI initiatives despite record investment (Pontefract, 2018; Sarkar, 2026).

The situation is analogous to quickly building a beautiful, fully populated city of high-rise towers, office buildings, and paved roads, only to discover later that it lacks sufficient plumbing and sewer systems. As a result, the city must be dug up and reconstructed while it is still operating. The city's appearance may be impressive, but the criterion that matters is whether its entire operation functions effectively. The architectural towers represent modern-day AI models and capabilities, developed from the office buildings (applications), while the roads serve as your dashboards. The missing plumbing below ground is your data governance and controls, which ensure data quality, readiness, and sustainability and are designed to support the city both in the present and through future expansion.

Root causes vary, but most business leaders cite poor data quality, limited data availability, and data architecture shortcomings as contributing challenges to readiness and AI-accelerated adoption (Campbell, 2026; Hitchcock, 2025). When companies fail to deploy effective internal data quality controls, they harm their customers and expose their shareholders and themselves to operational and reputational risk, along with fines, judgments, and civil penalties. Afterwards, they spend additional time and resources on remediation and repair in the markets they serve (Ennis, 2020; Hurley & Hurley, 2020; Rossi, 2023). In some cases, these companies never regain certain customers, as in the Wells Fargo account opening scandal (Hurley & Hurley, 2020). A classic example of the need for high-quality data in our systems is the JPMorgan London Whale trading incident, a single incident that cost over $6 billion in losses and was attributed to a trading formula error (Packham & Woebbeking, 2018; Schwimmer, 2025). In total, JPMorgan paid approximately $1 billion in fines and judgment penalties as part of a global settlement. This amount did not include the additional cost of legal, operational, and reputational repair (Packham & Woebbeking, 2018). The cost of poor quality is often multi-layered: the penalty itself is followed by the remediation it requires.

Types of Data Pipelines

Foidl et al. (2024) noted that there are various types of data pipelines based on strategy, method, and use case. Some types are classified by ingestion mode, such as batch and real-time, where data is processed at an interval or in response to a triggering event. Other types are organized by job method, such as Extract, Transform, Load (ETL) and Extract, Load, Transform (ELT), the two most common methods used to transfer data into warehouses, data lakes, and cloud storage. Finally, some pipelines are defined by use case, such as data collection and input preprocessing for data science, AI, or machine learning (ML) applications and models.
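The difference between ETL and ELT is mainly one of ordering, which a small sketch can make concrete. The example below is illustrative only: the function names, the in-memory lists standing in for a warehouse and a data lake, and the sample records are all assumptions, not part of any cited framework.

```python
Row = dict[str, object]


def extract() -> list[Row]:
    # Hypothetical source records; " 42 " stands in for untidy raw input.
    return [{"id": 1, "amount": " 42 "}, {"id": 2, "amount": "7"}]


def transform(rows: list[Row]) -> list[Row]:
    # Clean and type the amount field.
    return [{"id": r["id"], "amount": int(str(r["amount"]).strip())} for r in rows]


def run_etl(target: list[Row]) -> None:
    # ETL: transform inside the pipeline, then load only the cleaned rows.
    target.extend(transform(extract()))


def run_elt(raw_zone: list[Row], curated_zone: list[Row]) -> None:
    # ELT: load the raw rows first, then transform inside the destination.
    raw_zone.extend(extract())
    curated_zone.extend(transform(raw_zone))


warehouse: list[Row] = []
run_etl(warehouse)

lake_raw: list[Row] = []
lake_curated: list[Row] = []
run_elt(lake_raw, lake_curated)
```

Both paths end with the same curated rows. The ELT path also retains the untouched raw records in the destination, which is one reason it is often paired with data lakes and cloud storage.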

Scalability Factors

Data pipeline scalability depends on several interrelated factors, ranging from the data itself and the development methodologies used to deployment processes, infrastructure, and overall lifecycle management, which includes performance monitoring, lineage, and application configuration management (Foidl et al., 2024; Hitchcock, 2025). Many architectural issues stem from legacy environments that were designed to support transactional applications rather than the mix of structured and unstructured data that AI systems and capabilities now demand. These environments often require some form of migration or transformation, along with a rebuild of foundational validation, lineage, security, and persistent monitoring controls. These supporting activities ensure that varied data sources and models can be supported with flexibility and adaptability without sacrificing the core tenets of data quality: accuracy, completeness, and timeliness (Cosic, 2026; Foidl et al., 2024; Schwimmer, 2025).

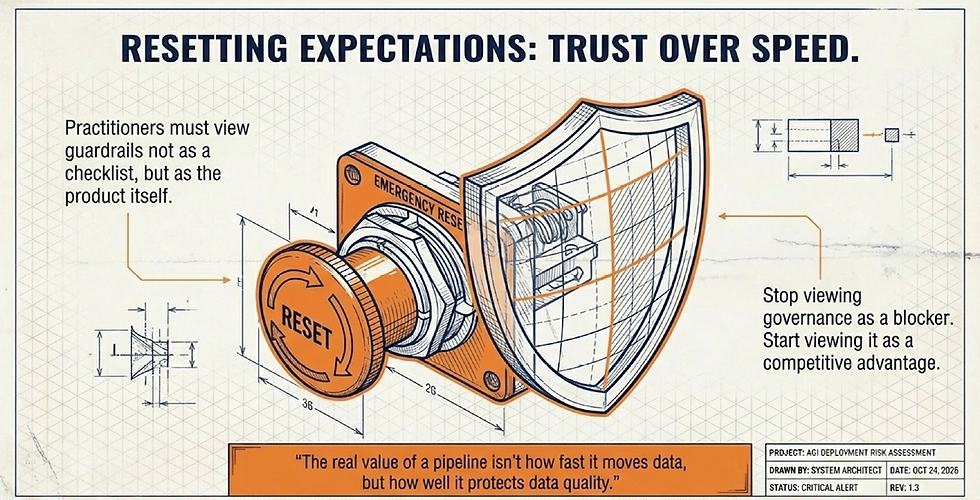
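The three core tenets named above (accuracy, completeness, and timeliness) can be expressed as simple measurable checks. The following is a minimal sketch under stated assumptions: the field names, the 24-hour freshness window, and the non-negative balance rule are hypothetical, and the accuracy check is only a validity proxy, since true accuracy requires comparison against a trusted reference.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class QualityReport:
    completeness: float  # share of rows with every required field present
    accuracy: float      # share of rows passing the validity rule
    timeliness: float    # share of rows updated within the freshness window


REQUIRED_FIELDS = ("account_id", "balance", "updated_at")  # hypothetical schema


def is_complete(row: dict) -> bool:
    return all(row.get(field) is not None for field in REQUIRED_FIELDS)


def is_accurate(row: dict) -> bool:
    # Stand-in validity rule (an assumption): balance is a non-negative number.
    balance = row.get("balance")
    return isinstance(balance, (int, float)) and balance >= 0


def is_timely(row: dict, now: datetime, max_age: timedelta) -> bool:
    updated = row.get("updated_at")
    return isinstance(updated, datetime) and now - updated <= max_age


def assess(rows: list[dict], now: datetime,
           max_age: timedelta = timedelta(hours=24)) -> QualityReport:
    n = len(rows) or 1  # avoid division by zero on an empty batch
    return QualityReport(
        completeness=sum(is_complete(r) for r in rows) / n,
        accuracy=sum(is_accurate(r) for r in rows) / n,
        timeliness=sum(is_timely(r, now, max_age) for r in rows) / n,
    )


now = datetime(2026, 1, 2, 12, 0, tzinfo=timezone.utc)
rows = [
    {"account_id": "A1", "balance": 100.0, "updated_at": now - timedelta(hours=1)},
    {"account_id": "A2", "balance": -5.0, "updated_at": now - timedelta(hours=2)},
    {"account_id": "A3", "balance": None, "updated_at": now - timedelta(days=3)},
    {"account_id": "A4", "balance": 20, "updated_at": now - timedelta(hours=30)},
]
report = assess(rows, now)
print(report)
```

In practice, scores like these would feed the persistent monitoring controls described above, with thresholds agreed between data owners and consumers.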

Call to Action: Implement Sound Principles

Practitioners should see these challenges as opportunities to respond, reset expectations, and re-establish guardrails to support our data pipeline environments. There are sound principles that practitioners can begin to implement and advocate for, helping their organizations become strategically competitive by adopting AI projects that deliver value to the company. Two key actions are to incorporate both data and AI governance controls and to build out a smart data architecture (Basso & Romer, 2026; Hitchcock, 2025; Mäntymäki et al., 2022). There are many others, but starting with these two signals that appropriate guardrails will now be part of everyday processes and not just a checklist to complete.

Start With Unified AI & Data Governance

Unified AI and data governance is the combined authority, control, and management of both data and AI, designed to minimize their risk and increase their overall value to the firm (Abraham et al., 2019; Subramanian, 2025). Nevala (2019) recommended that AI and data governance each serve as both an enabler and a beneficiary of the other. When properly invested in, data serves as a strategic asset that drives business outcomes. The policies, principles, practices, and processes used to better curate, clean, and connect data to generate and expedite insights in turn become the catalyst for using AI technology ethically, effectively, and within compliance (Mäntymäki et al., 2022; Nevala, 2019). This includes the use of data contracts and ownership to ensure each data product domain has clear accountability and stewardship (Mudusu, 2025).
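A data contract of the kind mentioned above can be as simple as a declared schema with a named owner and steward, checked before data moves downstream. The sketch below is one possible shape, not a standard: the `DataContract` class, the `violations` helper, the domain name, and the contact addresses are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataContract:
    domain: str   # data product domain the contract covers
    owner: str    # accountable party
    steward: str  # party responsible for day-to-day quality
    schema: dict  # field name -> expected Python type


def violations(contract: DataContract, record: dict) -> list[str]:
    """Return a list of human-readable contract violations for one record."""
    problems = []
    for field, expected in contract.schema.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    return problems


# Hypothetical contract for an illustrative "payments" domain.
contract = DataContract(
    domain="payments",
    owner="payments-data-owner@example.com",
    steward="payments-steward@example.com",
    schema={"txn_id": str, "amount": float, "currency": str},
)

good = {"txn_id": "T-1", "amount": 12.5, "currency": "USD"}
bad = {"txn_id": "T-2", "amount": "12.5"}

print(violations(contract, good))
print(violations(contract, bad))
```

Because the owner and steward travel with the schema, a failed check identifies not only what broke but who is accountable for resolving it.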

Build Out Cognitive Data Architecture

Secondly, build out smart data architectures such as semantic data layers, which use knowledge graphs, business metadata, and information taxonomy explainability frameworks to help prevent models from hallucinating or producing misinformation (Mudusu, 2025; Subramanian, 2025). This action enhances transparency and supports the ability to catalog and connect the embedded lineage and mapping of how data is used and how it travels through the pipeline architecture. These new systems are classified as cognitive data architectures: optimized frameworks that are active, intelligent, and designed for AI-enabled scalability (Karlapalem et al., 2025; Mudusu, 2025).
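Lineage, as described above, is naturally modeled as a graph in which each dataset points to the datasets it was derived from. The following is a minimal sketch of that idea using a plain dictionary; the dataset names and the `upstream` and `root_sources` helpers are hypothetical, and a production semantic layer or knowledge graph would carry far richer metadata.

```python
# Hypothetical lineage edges: each dataset maps to the datasets it derives from.
LINEAGE: dict[str, list[str]] = {
    "risk_dashboard": ["risk_features"],
    "risk_features": ["trades_clean", "positions_clean"],
    "trades_clean": ["trades_raw"],
    "positions_clean": ["positions_raw"],
}


def upstream(dataset: str, lineage: dict[str, list[str]]) -> set[str]:
    """Return every dataset that the given dataset depends on, directly or not."""
    seen: set[str] = set()
    stack = [dataset]
    while stack:
        current = stack.pop()
        for parent in lineage.get(current, []):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def root_sources(dataset: str, lineage: dict[str, list[str]]) -> set[str]:
    """Return only the original sources, i.e. upstream datasets with no parents."""
    return {d for d in upstream(dataset, lineage) if not lineage.get(d)}


print(sorted(upstream("risk_dashboard", LINEAGE)))
print(sorted(root_sources("risk_dashboard", LINEAGE)))
```

With a structure like this, a question such as "which raw sources feed this dashboard?" becomes a graph traversal rather than a manual investigation.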

Collectively, incorporating these principles is just the beginning. Teams that begin to view governance as an 'accelerator' will be able to make faster decisions, ethically and with greater control, than their competitors. In the long run, the real value of data pipelines for AI enablement won’t be how fast they can be built to move data, but how well they are designed and how resiliently they protect data quality as a trusted architecture.

Author Disclaimer: The views and opinions expressed herein are those of the Author alone and are shared in a personal capacity, in accordance with the Chatham House Rule. They do not reflect the official views or positions of the Author’s employer, organization, or any affiliated entity.

References

Abraham, R., Schneider, J., & vom Brocke, J. (2019), Data Governance: A Conceptual Framework and Research Agenda - International Journal of Information Management

Basso, M., & Romer, M. (2026), How AI-first Enterprises and Operating Models Unlock Scalable Value - World Economic Forum

Campbell, K. (2026), Why Data Readiness is a Strategic Imperative for Businesses - World Economic Forum

Cheah, Z. (2026), The Emerging Enterprise AI Stack is Missing a Trust Layer - CIO

Cosic, R. (2026), Why CIOs Need Analytics Capability to Scale AI - CIO

Ennis, D. (2020), Citi Fined $400M Over Risk Management and Data Governance Issues - BankingDive

Ewing, R. (2026), Hey, Senior PMs: Shipping Faster Won’t Get You Promoted - CIO

Foidl, H., Golendukhina, V., Ramler, R., & Felderer, M. (2024), Data Pipeline Quality: Influencing Factors and Root Causes of Data-Related Issues - Journal of Systems and Software

Hitchcock, T. (2025), Designing the Agent-Ready Data Stack - InfoWorld

Hurley, P., & Hurley, R. (2020), Lessons from the Wells Fargo Banking Scandal - Academy of Business Research Journal

Karlapalem, K., Krishna, P. R., & Valluri, S. R. (2025), Data Fabric Technologies and Applications - CODS-COMAD

Mäntymäki, M., Minkkinen, M., Birkstedt, T., & Viljanen, M. (2022), Defining Organizational AI Governance - AI and Ethics

Mudusu, S. K. (2025), Cognitive Data Architecture: Designing Self-Optimizing Frameworks for Scalable AI Systems - CIO

Nevala, K. (2019), Opportunity and Threat: The Intersection of AI and Data Governance - Big Data Quarterly

Packham, N., & Woebbeking, F. (2018), The London Whale - SSRN

Pontefract, D. (2018), The Foolishness of Fail Fast, Fail Often - Forbes

Rossi, C. (2023), Silicon Valley Bank: A Failure in Risk Management - Global Association of Risk Professionals

Sarkar, A. (2026), From AI Hype to Workflow Reality: A Strategic Framework for Integrating Generative AI Across Organizational Functions - Organizational Dynamics

Schwimmer, D. (2025), Why the Global Financial System Needs High-Quality Data It Can Trust - World Economic Forum

Siegel, E. (2023), The AI Hype Cycle is Distracting Companies - Harvard Business Review

Subramanian, H. (2025), The Case for a Unified Approach to AI and Data Governance - CIO
