Designing Data Strategy for Sustainable Alpha in Capital Markets

- Navita Singh

- Apr 5

IA FORUM MEMBER INSIGHTS: ARTICLE

By Navita Singh, Principal Data Scientist, Autodesk Research, AUTODESK

Data Strategy: An Introduction

In capital markets, data strategy must be agile to keep pace with rapidly evolving market regimes and regulatory landscapes. It must support sustainable, scalable returns while limiting large drawdowns. A common performance target is a Sharpe ratio of approximately two or higher. Because the Sharpe ratio divides average excess return (return above the risk-free rate) by the standard deviation of returns, this implies that volatility must be no more than half of the average excess return, with both measured over the same horizon. Given the large assets under management in capital markets, many strategies chase a limited number of profitable opportunities, which can become overcrowded. Market microstructure is also a critical factor. Small-cap equities may offer opportunities, but their limited liquidity does not permit significant capital to be deployed profitably. Corporate bonds are traded predominantly over the counter, so valuation methodology and counterparty risk must be explicitly incorporated into the strategy design.
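The Sharpe ratio target mentioned above can be made concrete with a small calculation. The following is a minimal sketch, not a production risk library: the function names, the 252-trading-day convention, and the use of the sample standard deviation are assumptions chosen for illustration.

```python
import math
import statistics


def annualized_sharpe(returns, annual_risk_free=0.0, periods_per_year=252):
    """Annualized Sharpe ratio from a list of periodic (e.g. daily) returns.

    Assumes simple returns, a constant risk-free rate, and sample standard
    deviation; real desks may use different conventions.
    """
    rf_per_period = annual_risk_free / periods_per_year
    excess = [r - rf_per_period for r in returns]
    vol = statistics.stdev(excess)
    if vol == 0:
        raise ValueError("volatility is zero; Sharpe ratio is undefined")
    return statistics.mean(excess) / vol * math.sqrt(periods_per_year)


def max_vol_for_target(annual_excess_return, target_sharpe=2.0):
    """Largest annualized volatility consistent with the target Sharpe ratio."""
    return annual_excess_return / target_sharpe
```

Under these assumptions, a strategy expected to earn 10% a year above the risk-free rate could tolerate at most about 5% annualized volatility to reach a Sharpe ratio of two, which is what `max_vol_for_target(0.10)` returns.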

Consequently, investment firms shape their data strategy around these considerations. Key questions include how to collect data that provides a competitive advantage and how to ingest it rapidly so that traders can act before markets open in the morning. A modern data strategy in capital markets represents a deliberate alignment between data collection, decision-making, and capital deployment. When implemented effectively, a data strategy becomes an engine for continuous adaptation, enabling trading organizations to compete as market regimes evolve.

Firms frequently treat data strategy as a technology initiative rather than a firmwide strategy. Large investments are made in data lakes, real-time feeds, and analytics platforms without a clear connection to trading and business outcomes. The result is that business objectives suffer, and trading desks operate in silos separate from technology teams. Leading organizations take a different approach by first identifying the decisions that truly drive alpha and then designing their data ecosystems to support those decisions directly.

Data Challenges Faced

Despite significant investment in data and analytics, capital markets teams continue to face persistent challenges in data collection. One of the most common issues is data fragmentation. Research teams, quantitative modelers, and electronic trading desks often operate with separate datasets, tools, and workflows. Expensive data feeds and streaming news subscriptions are frequently limited to a small group of users. While specialization is necessary, this separation often leads to duplicated effort and missed opportunities to compound insights across the organization.

If a feature is widely known across multiple organizations, it no longer represents alpha; instead, it becomes part of the expected market return, or beta. This is why many trading desks rely on proprietary data or alternative feature engineering methods to construct even well-known factors such as value or momentum. For example, when calculating value using price-to-book or price-to-earnings ratios, firms may rely on internal research earnings estimates instead of consensus estimates that are available to most external teams. This approach provides differentiated exposure and helps reduce strategy overcrowding.
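As an illustration of how the same value factor can be built from different inputs, the sketch below computes cross-sectional z-scores of forward earnings yield (the inverse of price-to-earnings) from whichever earnings estimates are supplied. The function name and the dictionary-based inputs are hypothetical, and any tickers, prices, or estimates passed in would be the firm's own data rather than anything implied by this article.

```python
import statistics


def earnings_yield_zscores(prices, eps_estimates):
    """Cross-sectional z-scores of forward earnings yield (EPS / price).

    `prices` and `eps_estimates` are hypothetical dicts keyed by ticker.
    Passing internal research estimates instead of consensus estimates
    yields a differently ranked, and potentially less crowded, value signal.
    """
    tickers = sorted(set(prices) & set(eps_estimates))
    if len(tickers) < 2:
        raise ValueError("need at least two securities for a cross-section")
    yields = {t: eps_estimates[t] / prices[t] for t in tickers}
    values = list(yields.values())
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    if sd == 0:
        # Every security has the same yield, so there is no value tilt.
        return {t: 0.0 for t in tickers}
    return {t: (y - mean) / sd for t, y in yields.items()}
```

Earnings yield is used here rather than price-to-earnings because it stays well behaved when estimated earnings are close to zero or negative. Running the function once with consensus estimates and once with internal estimates, then comparing the two rankings, gives a rough view of how differentiated the internal signal is.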

A more severe risk emerges when many desks pursue highly similar strategies concurrently. This can lead to short-term squeezes as market participants attempt to unwind similar positions simultaneously, as observed during the quant crisis of August 2007. Alternative sources of alpha, such as multimodal data and sentiment extracted from regional-language sources, can therefore become meaningful long-term differentiators.

Data quality issues must also be addressed before back-testing strategies. Inaccurate corporate action adjustments, inconsistent security identifiers, missing timestamps, and unannounced vendor changes can introduce subtle distortions that may not be immediately visible during development but can materially affect live trading performance. Strong domain expertise helps to identify and manage these risks. Regular engagement with data providers plays an important role in maintaining data integrity.
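Some of the checks described above can be automated before any back-test runs. The following is a minimal sketch under stated assumptions: the `Bar` record, its field names, the upper-case identifier convention, and the 40% jump threshold are all hypothetical choices for illustration, and the records are assumed to be sorted by time within each security.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Bar:
    security_id: str               # hypothetical internal identifier
    timestamp: Optional[datetime]  # None models a missing timestamp
    close: float                   # raw closing price
    adj_factor: float              # cumulative corporate-action adjustment


def find_quality_issues(bars, max_abs_return=0.4):
    """Return (index, issue) pairs for records that need review."""
    issues = []
    last_adj_close = {}
    seen = set()
    for i, bar in enumerate(bars):
        if bar.timestamp is None:
            issues.append((i, "missing timestamp"))
            continue
        normalized_id = bar.security_id.strip().upper()
        if bar.security_id != normalized_id:
            issues.append((i, "non-normalized identifier"))
        key = (normalized_id, bar.timestamp)
        if key in seen:
            issues.append((i, "duplicate record"))
        seen.add(key)
        adj_close = bar.close * bar.adj_factor
        prev = last_adj_close.get(normalized_id)
        # A large one-period move often signals an unadjusted split.
        if prev and abs(adj_close / prev - 1) > max_abs_return:
            issues.append((i, "suspicious jump"))
        last_adj_close[normalized_id] = adj_close
    return issues
```

A flagged jump is not necessarily an error, since genuine price moves of that size do occur, so the output is best treated as a review queue for someone with domain expertise rather than as an automatic filter.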

Synergy Among Quant Research, Electronic Trading & Quant Trading

One of the most underutilized sources of alpha in capital markets lies in improving synergy among quantitative research, quantitative trading, and electronic execution teams. Although these functions have distinct mandates, they are deeply interconnected and own related but fragmented data. Research publishes ideas for both internal and external consumption, trading desks trade on their proprietary ideas, and electronic trading develops algorithms to minimize execution costs. When these teams operate on disconnected data foundations, their ability to optimize the full trade lifecycle is limited.

Organizations that prioritize cross-functional data integration enable processes that allow insights to flow freely. Common market data and corporate action handling provide a baseline for collaboration, but true synergy emerges when trading signals, features, and performance metrics are standardized and accessible across teams. Achieving this level of integration requires more than common technology tools. It flourishes under cultural alignment and leadership commitment. Teams must be incentivized to share insights and collaborate, rather than optimizing solely for their own performance metrics. When data is treated as a shared strategic asset, the organization as a whole becomes more responsive and resilient.

Data Storage & Management

Historically, financial market data systems were anchored to on-premises data centers and specialized, high-cost hardware. Data was typically stored in monolithic relational database management systems (RDBMS) or high-performance time-series databases such as kdb+. Scaling was slow and manual: if volume increased, engineers had to physically rack new servers, which could take weeks. A hardware failure or a sudden spike in market activity could lead to significant downtime or dropped ticks.

Cloud-native platforms replace fixed hardware with containerized workloads orchestrated by systems like Kubernetes, allowing resources to scale elastically during peak market volatility. Real-time ingestion is managed by distributed event streaming platforms like Apache Kafka, which act as a high-throughput buffer for downstream consumers. Data is no longer trapped in silos but is consolidated into data lakes using open formats like Parquet or Delta Lake, stored on cost-effective object storage such as Amazon S3 or Azure Blob Storage. High-frequency insights can be distributed to quantitative teams globally, with pipeline health monitored through observability stacks like Prometheus and Grafana.

Data Governance

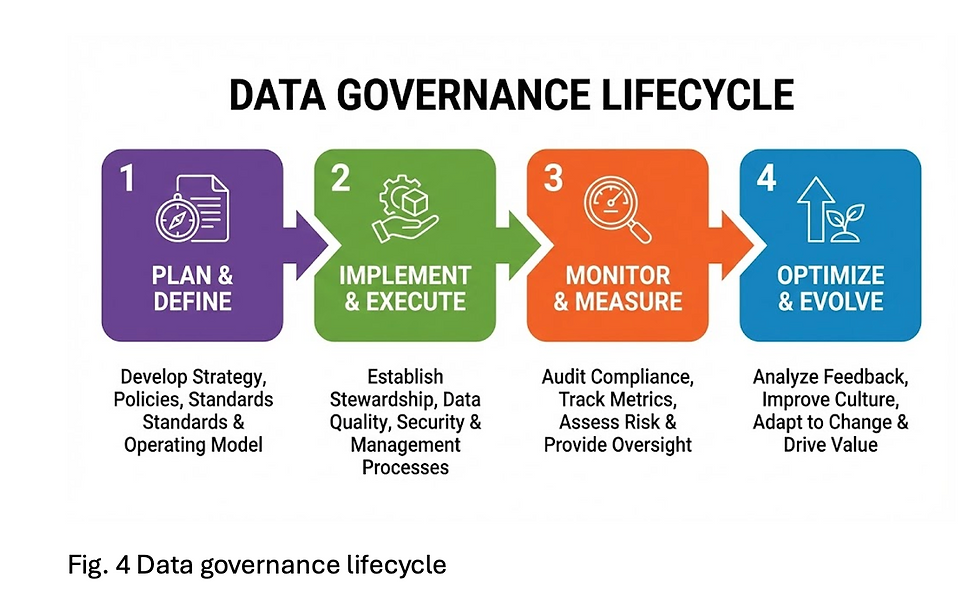

Data governance in capital markets is sometimes viewed as a barrier to speed and innovation. In reality, weak governance is one of the largest hidden sources of inefficiency. Capital markets are also among the most heavily regulated industries, where data failures can lead to severe consequences. When data ownership is unclear and lineage is undocumented, quality issues can surface late.

Strong data governance creates a foundation of trust and enforces accountability. Clear data lineage supports regulatory obligations and audits. Quality controls aligned with trading impact allow teams to focus on the issues that require immediate resolution. Leading firms embed governance directly into data pipelines and analytical workflows. Automation reduces manual oversight and allows governance practices to scale as systems grow more complex. Governance does not slow teams down when designed thoughtfully. Instead, it enables faster decision-making with greater confidence.
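One way to picture governance embedded in a pipeline is a publish step that refuses to release a dataset unless it has an accountable owner and passes its quality checks, and that records lineage as a side effect. The sketch below is an assumption-laden illustration, not a description of any particular firm's tooling: `publish`, `LineageRecord`, `QualityGateError`, and the in-memory `catalog` list are all hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass
class LineageRecord:
    dataset: str
    owner: str
    inputs: list          # names of upstream datasets
    content_hash: str     # fingerprint of the published rows
    checks_passed: dict   # check name -> True


class QualityGateError(Exception):
    """Raised when a dataset must not be published."""


def publish(dataset, owner, inputs, rows, checks, catalog):
    """Run quality checks, then append a lineage record to the catalog."""
    if not owner:
        raise QualityGateError(f"{dataset}: no accountable owner")
    results = {name: bool(check(rows)) for name, check in checks.items()}
    failed = [name for name, ok in results.items() if not ok]
    if failed:
        raise QualityGateError(f"{dataset}: failed checks {failed}")
    payload = json.dumps(rows, sort_keys=True, default=str).encode("utf-8")
    record = LineageRecord(
        dataset=dataset,
        owner=owner,
        inputs=list(inputs),
        content_hash=hashlib.sha256(payload).hexdigest(),
        checks_passed=results,
    )
    catalog.append(record)
    return record
```

Because the gate runs inside the pipeline, ownership, lineage, and quality evidence are captured automatically each time data is published, which is the sense in which automation lets governance scale without adding manual review steps.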

The Rise of LLMs & Additional Tools

The emergence of large language models (LLMs) and AI-assisted tools represents a significant shift in how data and knowledge can be leveraged in capital markets. While LLMs are unlikely to replace traditional quantitative models in generating alpha strategies, they can serve as powerful accelerators across the analytical lifecycle. They can help synthesize unstructured information, perform sentiment analysis on social media feeds, and extract historical context through retrieval-augmented generation (RAG). When analyzing the stock of a global company with operations in multiple countries, LLMs can help researchers translate and summarize news published in local languages.
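The retrieval step of RAG can be illustrated with a deliberately simple sketch. It ranks documents by bag-of-words cosine similarity and assembles a prompt from the top matches. Production systems would typically use learned embeddings and a vector index instead, and the call to the language model itself is omitted here. The function names and the prompt wording are hypothetical.

```python
import math
import re
from collections import Counter


def _vectorize(text):
    """Lower-cased word counts; a stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = _vectorize(query)
    ranked = sorted(documents, key=lambda d: _cosine(q, _vectorize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Assemble a grounded prompt; sending it to an LLM is left out."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )
```

Restricting the model to retrieved context is one common way to reduce hallucination, and keeping the retrieved passages alongside the answer gives researchers something to audit.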

These tools also present new challenges. Issues related to explainability, hallucination, and data leakage are particularly acute in regulated trading environments. As a result, the adoption of LLMs must be carefully governed and tightly integrated into existing data and risk frameworks. Firms that succeed will be those that treat LLMs not as standalone solutions, but as components within a broader data strategy. When combined with strong governance, disciplined experimentation, and clear decision alignment, these tools can enhance insights without compromising rigor.

Conclusion

Sustainable performance in capital markets will not come from chasing crowded signals. It will come from building adaptive systems where data, analytics, and governance reinforce one another in a disciplined and continuous feedback loop.

Data strategy, when treated as a strategic capability rather than a siloed technical initiative, becomes more than infrastructure. It becomes competitive architecture, a foundation that enables organizations to adapt as regimes shift.

Author Disclaimer: The views and opinions expressed herein are those of the Author alone and are shared in a personal capacity, in accordance with the Chatham House Rule. They do not reflect the official views or positions of the Author’s employer, organization, or any affiliated entity.
