On-Device AI for Trust, Privacy & Real-Time Decisions in Next-Gen FinTech

- Nitin Sharma

- Apr 5


IA FORUM MEMBER INSIGHTS: ARTICLE

By Nitin Sharma, Senior Manager, Digital Product Solution Design, AMERICAN EXPRESS

As financial ecosystems evolve, so do customer expectations. Today’s consumers demand instant credit decisions, seamless digital experiences, uncompromised privacy, and transparent trust. In an era where milliseconds define competitive advantage, traditional cloud-first AI architectures are increasingly strained.

While cloud computing has enabled large-scale model training and centralized intelligence, it struggles to meet rising expectations for real-time decision-making without introducing latency, privacy exposure, regulatory complexity, and escalating infrastructure costs.

A transformative paradigm is emerging: On-Device AI, intelligence embedded directly within user devices that enables secure, private, and real-time decision-making at the edge.

This article explores why On-Device AI is strategically significant for financial services, how a hybrid architecture unlocks its full potential, the most compelling FinTech use cases, technical and governance challenges, and the future research directions shaping this next wave of distributed intelligence.

Why On-Device AI?

Financial services operate in one of the most high-stakes digital environments in the global economy.

- Decisions must occur in milliseconds

- Fraud patterns evolve dynamically

- Regulatory scrutiny around data privacy is intensifying

- Customers expect frictionless, invisible security

Traditional cloud-centric AI architectures introduce unavoidable trade-offs:

- Network latency from cloud roundtrips

- Centralized data exposure, increasing breach surface area

- High compute and infrastructure costs at scale

- Slower feedback loops between user behavior and model response

In fraud prevention alone, a delay of even a few hundred milliseconds can determine whether a fraudulent transaction is blocked or approved. Similarly, personalization models that rely solely on remote processing can lose contextual nuance that exists only momentarily on a user’s device.

On-Device AI addresses these constraints by shifting inference closer to where data is generated:

- Running lightweight AI models directly on smartphones and edge hardware

- Minimizing dependency on constant cloud connectivity

- Enhancing privacy through data minimization and local processing

- Enabling real-time, context-aware decision-making

The result is not merely faster AI but more adaptive, resilient, and privacy-aware intelligence.

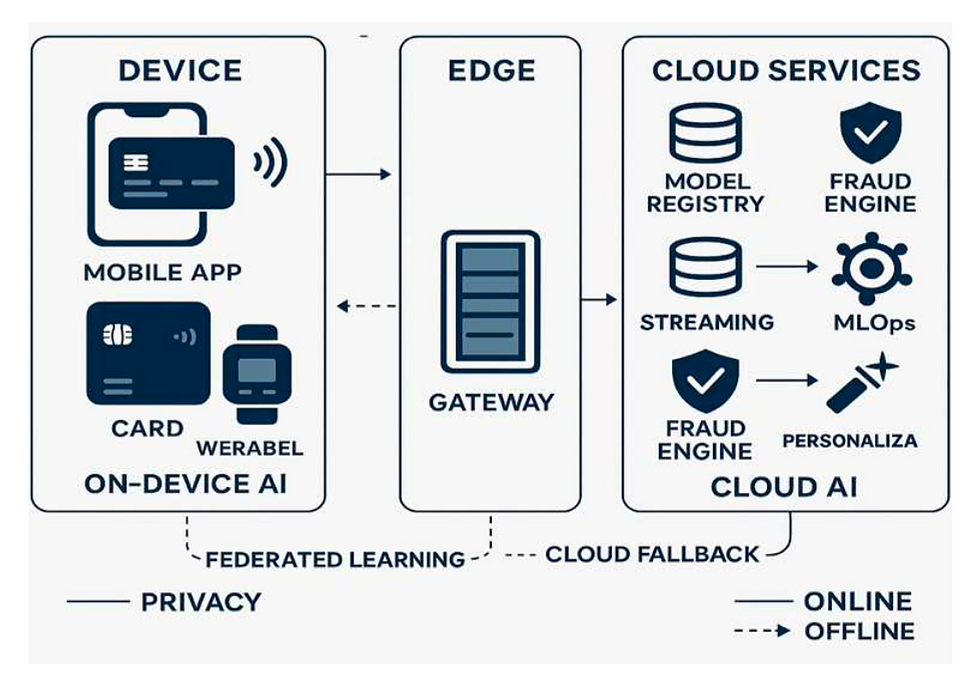

The Proposed Hybrid Architecture

On-Device AI does not eliminate the cloud; it redefines its role. The future of FinTech intelligence lies in a thoughtfully designed Hybrid 3-Layer Architecture.

On-Device AI Layer - Intelligence at the Edge: This layer performs real-time inference directly on the user’s device. Models are optimized for efficiency and speed. Typical capabilities include:

- Behavioral fraud detection using touch patterns, keystroke cadence, and device posture

- Micro credit assessments for contextual approval decisions

- Personalized financial nudges and spending insights

- Transaction anomaly detection before authorization

This layer delivers immediacy and contextual awareness.

Edge Gateway Layer - Coordination & Control: The edge layer acts as a secure orchestration and validation hub. It enables:

- Federated learning coordination across distributed devices

- Secure model updates and validation

- Escalation of high-risk transactions to centralized systems

- Policy enforcement and version governance

This layer ensures distributed intelligence remains aligned, secure, and continuously improving.
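The federated learning coordination listed above can be illustrated with a weighted average of the model updates that devices send to the gateway. This is a minimal sketch, assuming each device reports a weight vector together with its local sample count. The helper name `federated_average` and the sample values are hypothetical and not tied to any specific framework.

```python
from typing import List, Sequence, Tuple


def federated_average(updates: Sequence[Tuple[List[float], int]]) -> List[float]:
    """Weighted average of per-device weight vectors (FedAvg-style).

    Each update is (weights, num_local_samples). Hypothetical helper for
    illustration; a real gateway would also authenticate and validate updates.
    """
    if not updates:
        raise ValueError("no updates to aggregate")
    dim = len(updates[0][0])
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all updates must have the same dimension")
        for i, w in enumerate(weights):
            # Devices with more local samples contribute proportionally more.
            averaged[i] += w * n / total
    return averaged


# Two hypothetical devices: one with 1 local sample, one with 3.
device_updates = [([1.0, 2.0], 1), ([3.0, 4.0], 3)]
global_weights = federated_average(device_updates)
```

Only the aggregated weights move on to the cloud layer in this sketch; the raw behavioral data that produced each update stays on the device.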

Cloud AI Layer - Training, Governance & Scale: The cloud retains its critical role in:

- Centralized model training and retraining

- Enterprise risk governance and compliance controls

- Bias detection and fairness monitoring

- Large-scale data aggregation (where permitted)

- Advanced analytics and experimentation

The cloud evolves from a real-time decision engine into a strategic intelligence backbone.
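One way to picture how the three layers divide work is a routing rule driven by the risk score computed on the device. The sketch below is an assumption for illustration only: the `Route` names, the `route_transaction` function, and the 0.2 and 0.7 thresholds are hypothetical, and a production policy would be set by risk and compliance teams.

```python
from enum import Enum


class Route(Enum):
    DECIDE_ON_DEVICE = "decide_on_device"
    VALIDATE_AT_EDGE = "validate_at_edge"
    ESCALATE_TO_CLOUD = "escalate_to_cloud"


def route_transaction(risk_score: float, low: float = 0.2, high: float = 0.7) -> Route:
    """Pick the layer that makes the final decision for a transaction.

    risk_score is assumed to be an on-device model output in [0, 1].
    The thresholds are placeholders, not recommended values.
    """
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be in [0, 1]")
    if risk_score < low:
        return Route.DECIDE_ON_DEVICE   # low risk: no network round trip
    if risk_score < high:
        return Route.VALIDATE_AT_EDGE   # medium risk: gateway applies policy
    return Route.ESCALATE_TO_CLOUD      # high risk: centralized review


low_risk = route_transaction(0.05)
medium_risk = route_transaction(0.40)
high_risk = route_transaction(0.95)
```

The design choice here is that most transactions never leave the device, while the small share of high-risk cases still reaches centralized systems.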

Core FinTech Use Cases

Real-Time Fraud Detection

On-device models can analyze behavioral biometrics such as:

- Touch pressure and swipe velocity

- Session timing irregularities

- Device orientation anomalies

- Micro-gesture deviations

Fraud signals are evaluated before the transaction request leaves the device, enabling preemptive protection rather than reactive defense.
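As a rough illustration of scoring behavioral signals locally, the sketch below compares the current session against a baseline kept on the device and reports the largest z-score. It is a simplified stand-in for a trained model: the feature names, the sample values, and the threshold of 3.0 are all assumptions.

```python
from statistics import mean, pstdev
from typing import Dict, List


def anomaly_score(history: Dict[str, List[float]], current: Dict[str, float]) -> float:
    """Largest absolute z-score of the current session against local history."""
    worst = 0.0
    for name, value in current.items():
        samples = history[name]
        mu = mean(samples)
        sigma = pstdev(samples)
        if sigma == 0:
            continue  # no variation recorded for this feature; skip it
        worst = max(worst, abs(value - mu) / sigma)
    return worst


# Hypothetical per-user baseline stored on the device.
history = {
    "touch_pressure": [0.50, 0.52, 0.48, 0.51, 0.49],
    "swipe_velocity": [1.20, 1.10, 1.30, 1.25, 1.15],
}
typical_session = {"touch_pressure": 0.50, "swipe_velocity": 1.22}
unusual_session = {"touch_pressure": 0.90, "swipe_velocity": 3.00}

THRESHOLD = 3.0  # assumed cut-off; would be tuned on real data
flag_typical = anomaly_score(history, typical_session) > THRESHOLD
flag_unusual = anomaly_score(history, unusual_session) > THRESHOLD
```

Because both the baseline and the comparison live on the device, the check can run before any transaction request is sent.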

Intelligent Credit Line Decisions

Micro risk-scoring engines embedded within mobile apps can provide contextual approvals based on:

- Transaction behavior patterns

- Payment consistency signals

- Real-time cash flow insights

This allows for dynamic, personalized credit line adjustments while reducing central processing bottlenecks.
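A micro risk-scoring engine of this kind could be as small as a logistic model over a handful of features. The following is a sketch under stated assumptions: the feature names, coefficients, and bias are invented for illustration and would in practice come from centrally trained and validated models.

```python
import math
from typing import Dict

# Hypothetical coefficients; features are assumed to be scaled to [0, 1].
WEIGHTS = {
    "late_payment_rate": 3.0,      # payment consistency signal
    "cash_flow_volatility": 2.0,   # real-time cash flow signal
    "unusual_spend_ratio": 1.5,    # transaction behavior signal
}
BIAS = -3.0


def risk_probability(features: Dict[str, float]) -> float:
    """Logistic risk score in (0, 1); higher means riskier."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


steady_customer = {
    "late_payment_rate": 0.05,
    "cash_flow_volatility": 0.05,
    "unusual_spend_ratio": 0.05,
}
stressed_customer = {
    "late_payment_rate": 0.80,
    "cash_flow_volatility": 0.70,
    "unusual_spend_ratio": 0.90,
}
p_steady = risk_probability(steady_customer)
p_stressed = risk_probability(stressed_customer)
```

A model this small evaluates in microseconds on a phone, which is what makes contextual approvals feasible without a round trip for every request.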

Hyper-Personalized Financial Experiences

Personalization becomes immediate and contextual:

- Real-time spend categorization

- Savings nudges triggered by transaction intent

- Reward optimization based on behavioral clusters

Since much of this processing occurs locally, personalization improves without increasing centralized data exposure.

Low-Connectivity Financial Inclusion

In emerging markets or rural geographies where connectivity is intermittent, on-device inference allows:

- Offline credit scoring

- Secure transaction verification

- Delayed synchronization once connectivity is restored

This expands access to financial services without requiring continuous infrastructure dependency.
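The delayed synchronization pattern described above can be sketched as a local queue that holds decisions made offline and uploads them in order once the device is back online. The class name `OfflineDecisionQueue` and the `upload` callback are hypothetical, and a real implementation would also persist the queue to encrypted storage.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, List


@dataclass
class OfflineDecisionQueue:
    """Holds locally made decisions until they can be uploaded."""
    pending: Deque[dict] = field(default_factory=deque)

    def record(self, decision: dict) -> None:
        self.pending.append(decision)

    def sync(self, is_online: bool, upload: Callable[[dict], bool]) -> int:
        """Upload queued decisions in order; stop at the first failure."""
        sent = 0
        while is_online and self.pending:
            if not upload(self.pending[0]):
                break  # keep the item queued and retry on the next sync
            self.pending.popleft()
            sent += 1
        return sent


queue = OfflineDecisionQueue()
queue.record({"txn_id": "t-1", "approved": True})
queue.record({"txn_id": "t-2", "approved": False})

received: List[dict] = []
sent_offline = queue.sync(False, lambda d: received.append(d) or True)
sent_online = queue.sync(True, lambda d: received.append(d) or True)
```

An item is removed from the queue only after its upload succeeds, so a dropped connection mid-sync does not lose decisions.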

Trust & Privacy: Built-In by Design

Trust is not a feature - it is an architectural principle. On-Device AI strengthens privacy posture through:

- Data minimization and localized processing

- Reduced transmission of raw behavioral data

- Encrypted federated learning updates

- Differential privacy noise injection

- Edge-level explainability modules for transparency

By limiting centralized data accumulation, institutions reduce systemic risk while aligning with global regulatory trends such as GDPR, CCPA, and emerging AI governance frameworks.
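Differential privacy noise injection is commonly implemented by clipping each model update to a maximum norm and then adding random noise before it leaves the device. The sketch below shows only that mechanical step. The function name and parameter values are assumptions, and a formal privacy guarantee depends on calibrating `noise_std` to `clip_norm` and a privacy budget, which is not shown here.

```python
import math
import random
from typing import List


def clip_and_add_noise(
    update: List[float],
    clip_norm: float,
    noise_std: float,
    rng: random.Random,
) -> List[float]:
    """Clip an update to at most clip_norm (L2), then add Gaussian noise."""
    norm = math.sqrt(sum(x * x for x in update))
    # Scale down only if the update exceeds the clipping bound.
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale + rng.gauss(0.0, noise_std) for x in update]


rng = random.Random(42)  # seeded only to make the example repeatable
raw_update = [3.0, 4.0]  # L2 norm of 5.0
clipped_only = clip_and_add_noise(raw_update, clip_norm=1.0, noise_std=0.0, rng=rng)
private_update = clip_and_add_noise(raw_update, clip_norm=1.0, noise_std=0.1, rng=rng)
```

Clipping bounds how much any single device can influence the aggregate, and the noise masks the remaining individual contribution.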

Key Challenges

Despite its promise, On-Device AI introduces non-trivial engineering complexities.

Model Optimization: Edge models must be compressed with minimal loss of accuracy. Techniques include quantization, pruning, and knowledge distillation.
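To make quantization concrete, the sketch below maps floating-point weights to 8-bit integers with a single scale factor and then reconstructs them. It is a simplified, symmetric per-tensor scheme written for illustration; the function names are hypothetical, and production toolchains use more elaborate variants.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to the integer range [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Approximate the original weights from their int8 representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.27]
q_weights, q_scale = quantize_int8(weights)
restored = dequantize(q_weights, q_scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now needs one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most half the scale factor per weight.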

Federated Learning Complexity: Secure aggregation, adversarial robustness, and fairness drift across distributed populations remain active areas of research.
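The idea behind secure aggregation can be shown with pairwise masks: each pair of devices shares a random mask that one adds and the other subtracts, so individual updates are hidden while their sum is unchanged. This is a toy sketch under strong assumptions. A single seeded generator stands in for per-pair shared secrets, and real protocols derive masks from key agreement and handle device dropouts.

```python
import random
from typing import Dict, List


def masked_updates(updates: Dict[str, List[float]], seed: int = 0) -> Dict[str, List[float]]:
    """Add pairwise masks that cancel when all masked updates are summed."""
    ids = sorted(updates)
    dim = len(updates[ids[0]])
    masked = {d: list(updates[d]) for d in ids}
    rng = random.Random(seed)  # stand-in for per-pair shared secrets
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            mask = [rng.uniform(-1.0, 1.0) for _ in range(dim)]
            for k in range(dim):
                masked[a][k] += mask[k]  # device a adds the shared mask
                masked[b][k] -= mask[k]  # device b subtracts the same mask
    return masked


# Hypothetical updates from three devices.
updates = {"a": [1.0, 2.0], "b": [3.0, 4.0], "c": [5.0, 6.0]}
masked = masked_updates(updates)

true_sum = [sum(u[k] for u in updates.values()) for k in range(2)]
masked_sum = [sum(m[k] for m in masked.values()) for k in range(2)]
```

The aggregator sees only the masked vectors, yet `masked_sum` matches `true_sum` up to floating-point rounding because every mask appears once with each sign.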

Governance & Explainability: Financial institutions must ensure auditability and regulatory transparency even when decisions occur locally.

Device Constraints: Battery consumption, hardware fragmentation, OS variability, and secure enclave limitations demand adaptive deployment strategies.

These challenges require cross-disciplinary collaboration across AI engineering, cybersecurity, regulatory compliance, and product design.

Open Research Areas

The future of distributed financial intelligence will be shaped by breakthroughs in:

- Quantum-safe encryption for federated updates

- Zero-knowledge proof-based model validation

- Energy-efficient edge transformers

- On-device LLM distillation for conversational finance

- Adaptive bias correction in decentralized systems

Advances in these areas will determine how scalable and trustworthy edge intelligence becomes.

Strategic Implications for Enterprises

On-Device AI represents more than a technical shift - it’s a strategic realignment. Organizations that embrace distributed intelligence can:

- Move from reactive fraud mitigation to proactive fraud prevention

- Balance privacy with performance rather than treating them as trade-offs

- Reduce cloud compute costs over time

- Increase customer trust through visible transparency

- Differentiate through ultra-low-latency experiences

Enterprises must rethink governance, talent strategy, infrastructure investment, and risk frameworks to capitalize on this paradigm.

The Road Ahead

Next-generation FinTech ecosystems will be defined not by bigger data centers but by smarter devices. On-Device AI reimagines financial intelligence as:

- Instant

- Context-aware

- Privacy-preserving

- Energy-efficient

- Trust-centric

As distributed intelligence matures, the institutions that succeed will be those that architect for trust, optimize for immediacy, and design for a world where intelligence lives everywhere - not just in the cloud.

Author Disclaimer: The views and opinions expressed herein are those of the Author alone and are shared in a personal capacity, in accordance with the Chatham House Rule. They do not reflect the official views or positions of the Author’s employer, organization, or any affiliated entity.
